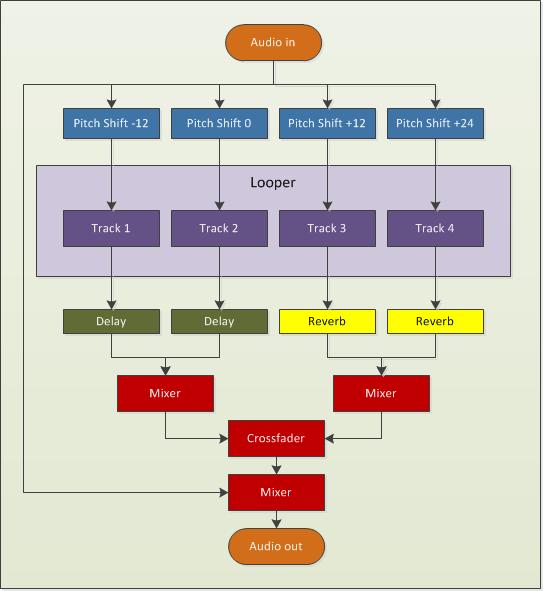

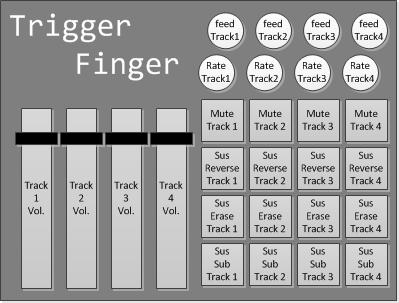

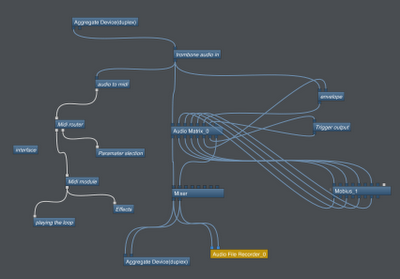

The recent release of version 2.0 of Mobius has spurred me to redesign my meta-trombone Bidule patch. Since I can have both the new and the old version in the same patch, my matrix mixer (and some of the most complex patching) can be eliminated by using both versions of the looper.

The first looper will be the one that is “played” by trombone notes. This is what I mean by playing the looper:

- trombone notes will trigger the loop playback from a position determined by the note value

- and/or trombone notes will change the playback rate relative to the note played

- and the amplitude of the loop will follow the trombone performance by using an envelope follower.
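To make those three behaviours concrete, here is a minimal sketch in Python (the real thing lives in Bidule and Mobius, not in code like this). The reference note, the note range and the attack/release coefficients are all assumptions chosen for illustration, as are the function names.

```python
REFERENCE_NOTE = 60            # assumption: this note plays the loop at its recorded speed
LOW_NOTE, HIGH_NOTE = 40, 77   # assumption: rough tenor trombone range (E2 to F5)

def playback_rate(note, reference=REFERENCE_NOTE):
    """Equal-tempered rate ratio: 12 semitones up doubles the speed."""
    return 2.0 ** ((note - reference) / 12.0)

def start_position(note, loop_frames, low=LOW_NOTE, high=HIGH_NOTE):
    """Map a note linearly onto a start frame in the loop, clamping out-of-range notes."""
    clamped = min(max(note, low), high)
    # Integer arithmetic keeps the result exact for any loop length.
    return (clamped - low) * loop_frames // (high - low + 1)

def envelope(samples, attack=0.5, release=0.01):
    """One-pole envelope follower: rises quickly, decays slowly."""
    level = 0.0
    followed = []
    for sample in samples:
        target = abs(sample)
        coeff = attack if target > level else release
        level += coeff * (target - level)
        followed.append(level)
    return followed
```

Multiplying the loop output by the follower's level, sample by sample, is what would make the loop's amplitude track the trombone.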

I’ll have a second instance of Mobius down the line that will resample the output of the first looper in addition to (or in the absence of) any incoming audio. Effects will be applied after audio in, after the envelope follower and after the resampling looper. I’ve yet to determine exactly what those effects will be, but the success of my vocal set patch leads me to consider a rather minimalist approach.

Speaking of minimalism, I’ve been listening to a lot of Steve Reich these days and I’d like to incorporate some phasing pattern play into my set for my upcoming performance at this year’s Y2K festival. One way to quickly create an interesting phasing composition is to capture a loop to several tracks at once and then trim some of the tracks by a predetermined amount. This can be easily accomplished with a script and I’ve been toying with some ideas along those lines.
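The arithmetic behind the trimming idea can be sketched in a few lines of Python. This is not a Mobius script, just a way to see how long a phasing cycle lasts; the function names and the example numbers (a 48 kHz sample rate, a 2 second loop, a 1000 frame trim) are assumptions.

```python
from math import gcd

def trimmed_lengths(loop_frames, trim_frames, tracks):
    """Track 0 keeps the full loop; each later track is shortened by one more trim step."""
    return [loop_frames - i * trim_frames for i in range(tracks)]

def frames_until_realigned(lengths):
    """All tracks start together again after the least common multiple of their lengths."""
    total = 1
    for length in lengths:
        total = total * length // gcd(total, length)
    return total

# Assumed example: a 2 second loop at 48 kHz, trimmed by 1000 frames per track.
lengths = trimmed_lengths(96000, 1000, 3)          # [96000, 95000, 94000]
two_track_cycle = frames_until_realigned(lengths[:2])
print(two_track_cycle / 48000, "seconds")          # 190.0 seconds
```

With those assumed numbers, two tracks drift apart and meet again after 190 seconds, during which the full loop plays 95 times and the trimmed one 96 times. A smaller trim stretches the cycle, and each extra track multiplies it further.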

Something else to which I’ve given some consideration is the development of midi effects to insert on the midi notes interpreted from the trombone performance. Some midi effects that would be easy to implement:

- midi note delay;

- arpeggiator;

- remapper (to specific key signature);

- transposer.

It will be interesting to see what impact these effects will have on the loop playback of the first looper. Another idea is to remap notes to parameter selection or note velocity to parameter value.
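To show how little is involved, here is a rough Python sketch of the four effects listed above. Events are assumed to be `(time_in_seconds, note, velocity)` tuples; the function names, the default major scale and the arpeggio pattern are illustrative choices, not anything Bidule provides.

```python
MAJOR_SCALE = (0, 2, 4, 5, 7, 9, 11)  # semitone offsets from the tonic

def transpose(note, semitones):
    """Shift a note, keeping it inside the valid MIDI range 0-127."""
    return min(max(note + semitones, 0), 127)

def remap_to_key(note, tonic=0, scale=MAJOR_SCALE):
    """Snap a note down to the closest pitch at or below it that belongs to the key."""
    while (note - tonic) % 12 not in scale:
        note -= 1
    # If snapping fell below note 0, move up an octave (same scale degree).
    return note if note >= 0 else note + 12

def delay(events, seconds):
    """Return the original events plus one delayed copy, sorted by time."""
    echoed = [(t + seconds, n, v) for (t, n, v) in events]
    return sorted(list(events) + echoed)

def arpeggiate(note, intervals=(0, 4, 7), step=0.125, start=0.0, velocity=100):
    """Spread one incoming note into a timed pattern of notes."""
    return [(start + i * step, transpose(note, interval), velocity)
            for i, interval in enumerate(intervals)]
```

For example, `remap_to_key(61)` pulls a C sharp down to C (60) in C major, and `arpeggiate(60)` turns a single C into a C major triad spread over a quarter of a second.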

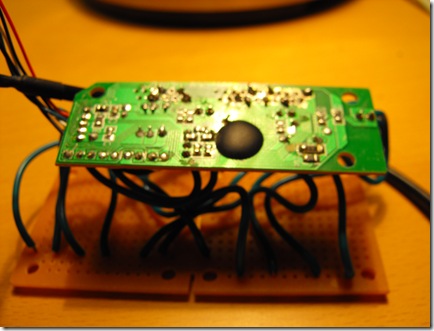

Another significant change is that I’ve acquired hardware to interpret midi notes from trombone performance. I’ve decided to go with the Sonuus I2M instead of my previously discussed approach mainly because I was wasting too much time trying to make the ultrasonic sensor work properly. Bottom line, it wasn’t that interesting and I’d rather be playing music. My current plan is to use a contact microphone to feed audio to the I2M and to have a gate on the midi notes it generates in Bidule that I’ll activate with a footswitch.
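The gate logic is simple, but there is one detail worth sketching: if the gate closes while a note is sounding, its note-off still has to get through or the note will hang. The Python below is only an illustration of that idea; the class name is hypothetical and it assumes a momentary footswitch (gate open only while pressed).

```python
class NoteGate:
    """Lets note-ons through only while the footswitch is held (assumed momentary)."""

    def __init__(self):
        self.is_open = False
        self.sounding = set()  # notes that were let through and not yet released

    def footswitch(self, pressed):
        self.is_open = pressed

    def process(self, kind, note):
        """kind is 'on' or 'off'; returns True if the message should pass."""
        if kind == "on":
            if self.is_open:
                self.sounding.add(note)
                return True
            return False
        # Note-off: pass it only if the matching note-on was let through,
        # even when the gate has closed in the meantime.
        if note in self.sounding:
            self.sounding.discard(note)
            return True
        return False
```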

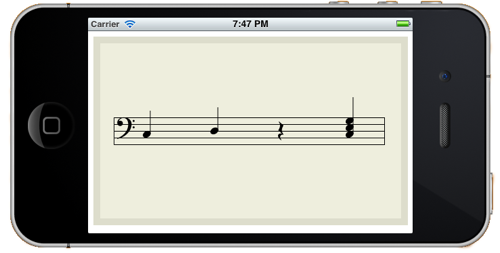

I’ll also be designing performance software for iOS as I intend to attach an iPod touch to the trombone to serve as my heads-up display for various system states (updated wirelessly with OSC). I’ll be controlling the iPod with a Bluetooth page-turning footswitch. One pedal on the footswitch will change between different screens and the other pedal will activate an action available on that screen. For instance, on the notation screen, pressing the action pedal will display a new melodic line (either algorithmically generated or randomly chosen from previously composed fragments).
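The two-pedal scheme boils down to a small state machine, sketched here in Python rather than in actual iOS code. Apart from the notation screen, everything named below (the class, the second screen, the fragments) is a placeholder made up for the example.

```python
import random

FRAGMENTS = ["C4 E4 G4", "D4 F4 A4", "Bb3 D4 F4"]  # placeholder fragments

def new_melodic_line():
    """Pick one of the previously composed fragments at random."""
    return random.choice(FRAGMENTS)

class HeadsUpDisplay:
    """Two-pedal controller: one pedal cycles screens, the other fires the screen's action."""

    def __init__(self, screens):
        # screens: ordered list of (name, action) pairs; each action returns text to show
        self.screens = list(screens)
        self.index = 0

    @property
    def screen(self):
        return self.screens[self.index][0]

    def press_screen_pedal(self):
        """Advance to the next screen, wrapping around after the last one."""
        self.index = (self.index + 1) % len(self.screens)
        return self.screen

    def press_action_pedal(self):
        """Run whatever action belongs to the current screen."""
        return self.screens[self.index][1]()

hud = HeadsUpDisplay([
    ("notation", new_melodic_line),
    ("loop status", lambda: "track 1 playing"),  # hypothetical second screen
])
```

Because each screen carries its own action, adding a screen later means adding one more pair to the list, and the pedals keep working the same way.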

Now all I have to do is build it (and they will come… or more accurately, I will go to them).